A while ago, I got my hands on some NVIM config files from a friend. I copied them to my NVIM config directory and opened NVIM, excited to see how cool my editor was going to look... until I was met with a bunch of � characters all over my screen. So I told my friend about it, and he suggested I install Nerd Fonts. I did, and everything seemed to work out well... UNTIL I had to reset all my configs and the problem popped up again. This time, though, I decided to do a little digging into why it was actually happening.

This brought back lots of unanswered questions about character encodings: why an emoji seems to use up more of a text input’s character limit than a normal character does, why I have to write “Content-Type: text/html; charset=utf-8” in HTTP headers, and what UTF is to begin with and why there are so many versions of it. To get answers, I started looking for resources and found some useful information in books and videos.

Every resource I came across had one thing in common: they all began by explaining the history of character encoding, and almost all of them mentioned the chaotic situation in Japan. So I’ll do the same, since the history is essential to understanding why all this is needed, but I’ll try to keep it short.

ASCII was a standard created for encoding English characters and some symbols in 7 bits. Before ASCII there weren’t many consistent digital encoding standards, so we will start here. The standard defined mappings for 128 characters stored in 7 bits, which left the high bit unused, since most computers used 8-bit bytes by then. This was good enough for English, and the spare bit gave another 128 possible values for characters that weren’t originally represented. Soon, many countries started using that extra bit to map their own native characters, each adopting its own standard. Countries whose writing systems have far more characters than 8 bits can hold, like Japan, had to resort to much more sophisticated schemes such as the double-byte character set (DBCS).

These different encodings were called code pages, and depending on which code page you were using, the same byte sequence could be displayed as different characters. For example, if you sent an email from Germany to the United States and your content included any native German characters, they might be displayed as �, or as random Japanese characters if you sent the email to Japan. You can still see this in Microsoft Notepad, which lets you change the encoding (it’s set to UTF-8 by default).
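To make the code page problem concrete, here’s a small Go sketch that reads the same bytes two ways. I’m assuming the sender’s system stored “Grüße” in ISO-8859-1 (Latin-1), where each byte maps straight to the Unicode code point with the same number, and that the receiver reads it as UTF-8. The helpers `decodeLatin1` and `decodeUTF8` are hypothetical names for this example, not library functions.

```go
package main

import (
	"fmt"
	"unicode/utf8"
)

// decodeLatin1 interprets each byte as an ISO-8859-1 (Latin-1) character.
// In Latin-1 every byte value equals its Unicode code point, so byte -> rune.
func decodeLatin1(b []byte) string {
	runes := make([]rune, len(b))
	for i, c := range b {
		runes[i] = rune(c)
	}
	return string(runes)
}

// decodeUTF8 decodes b as UTF-8. utf8.DecodeRune returns utf8.RuneError
// (U+FFFD, the � character) with size 1 for every invalid byte.
func decodeUTF8(b []byte) string {
	var out []rune
	for len(b) > 0 {
		r, size := utf8.DecodeRune(b)
		out = append(out, r)
		b = b[size:]
	}
	return string(out)
}

func main() {
	// "Grüße" as a Latin-1 system stores it: ü = 0xFC, ß = 0xDF.
	data := []byte{'G', 'r', 0xFC, 0xDF, 'e'}

	fmt.Println("as Latin-1:", decodeLatin1(data)) // shows Grüße
	fmt.Println("as UTF-8:", decodeUTF8(data))     // shows Gr��e
}
```

The bytes never change; only the assumed encoding does, and that alone decides whether you see German letters or replacement characters.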

To solve this problem, a new standard called Unicode was introduced. Unicode aimed to give every letter in the world its own 16-bit code point, which allows 65,536 possible characters, while keeping the first 128 mappings from ASCII as they are. (The code space has since grown to 1,114,112 code points, U+0000 to U+10FFFF, of which roughly 150,000 are assigned to characters today.)
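The numbers above are just arithmetic, but Go’s `unicode` package exposes the upper bound as `unicode.MaxRune`, so here’s a tiny sketch that checks them. The two helper functions are hypothetical names for this example.

```go
package main

import (
	"fmt"
	"unicode"
)

// sixteenBitSpace is how many distinct values a 16-bit code point can hold.
func sixteenBitSpace() int { return 1 << 16 }

// currentCodeSpace counts every code point from U+0000 to unicode.MaxRune (U+10FFFF).
func currentCodeSpace() int { return int(unicode.MaxRune) + 1 }

func main() {
	fmt.Println("16-bit code points:", sixteenBitSpace())    // 65536
	fmt.Println("current code space:", currentCodeSpace())   // 1114112
	fmt.Printf("highest code point: %U\n", unicode.MaxRune) // U+10FFFF
}
```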

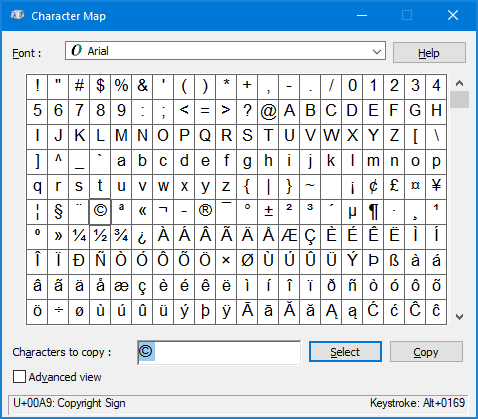

In Unicode, a code point is written in the form “U+1234”, where “1234” is the assigned number in hexadecimal. For example, the character “A” is assigned the code point U+0041. Code points are weird to understand, at least they were for me. They are an abstract representation that neither humans nor computers understand directly: to be understood by humans, they have to be mapped to glyphs, and to be stored in a computer, they have to be encoded.
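Go’s `fmt` package can print a code point in exactly this U+XXXX notation with the `%U` verb. A minimal sketch (the helper name `codePointLabel` is just for illustration):

```go
package main

import "fmt"

// codePointLabel formats a rune in the usual U+XXXX notation.
func codePointLabel(r rune) string {
	return fmt.Sprintf("%U", r)
}

func main() {
	// Ranging over a string yields one code point at a time.
	for _, r := range "Añ€" {
		fmt.Printf("%c -> %s\n", r, codePointLabel(r))
	}
}
```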

If you’re familiar with Go, it has its own term for Unicode code points: “runes”. The rune type is an alias for int32.
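Here’s a quick sketch of what “alias” means in practice: a rune can be assigned to an int32 without any conversion, and `%T` reports it as int32. It also shows that `len` on a string counts bytes, while ranging over the string decodes runes. `runeTypeName` is a hypothetical helper for this example.

```go
package main

import "fmt"

// runeTypeName returns the type name fmt reports for a rune value.
func runeTypeName() string {
	var r rune
	return fmt.Sprintf("%T", r)
}

func main() {
	var r rune = '\u00e9' // é
	var n int32 = r       // no conversion needed: rune is an alias for int32

	fmt.Println(runeTypeName()) // int32
	fmt.Println(r, n)           // 233 233, the code point as a plain number

	s := "\u00e9"
	fmt.Println(len(s)) // 2: len counts UTF-8 bytes, not runes
	for i, c := range s {
		// Ranging over a string decodes it into runes.
		fmt.Printf("byte offset %d: %c (%U)\n", i, c, c)
	}
}
```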

Encodings map Unicode code points to values that a computer can interpret and store (byte sequences). One way to encode Unicode is to map every code point to 16 bits of memory (UCS-2; its successor UTF-16 does the same for most characters but uses a pair of 16-bit units for code points above U+FFFF). That doubles the size required for ASCII characters: even letters that fit in 8 bits take up 16 bits, with the high byte always 0. If you mostly work with ASCII characters, this isn’t desirable. A better alternative is UTF-8.
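Go’s standard `unicode/utf16` package can show this directly. In the sketch below (the helper name `utf16Units` is my own), plain “A” still costs 2 bytes, and an emoji beyond U+FFFF needs two 16-bit units, a surrogate pair, which fixed-width UCS-2 couldn’t represent at all.

```go
package main

import (
	"fmt"
	"unicode/utf16"
)

// utf16Units returns the UTF-16 code units for s (byte order is not applied here).
func utf16Units(s string) []uint16 {
	return utf16.Encode([]rune(s))
}

func main() {
	for _, s := range []string{"A", "\u00e9", "\U0001F600"} {
		units := utf16Units(s)
		// Each code unit is 2 bytes, so even ASCII "A" costs 2 bytes.
		fmt.Printf("%s: %04X (%d bytes)\n", s, units, 2*len(units))
	}
}
```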

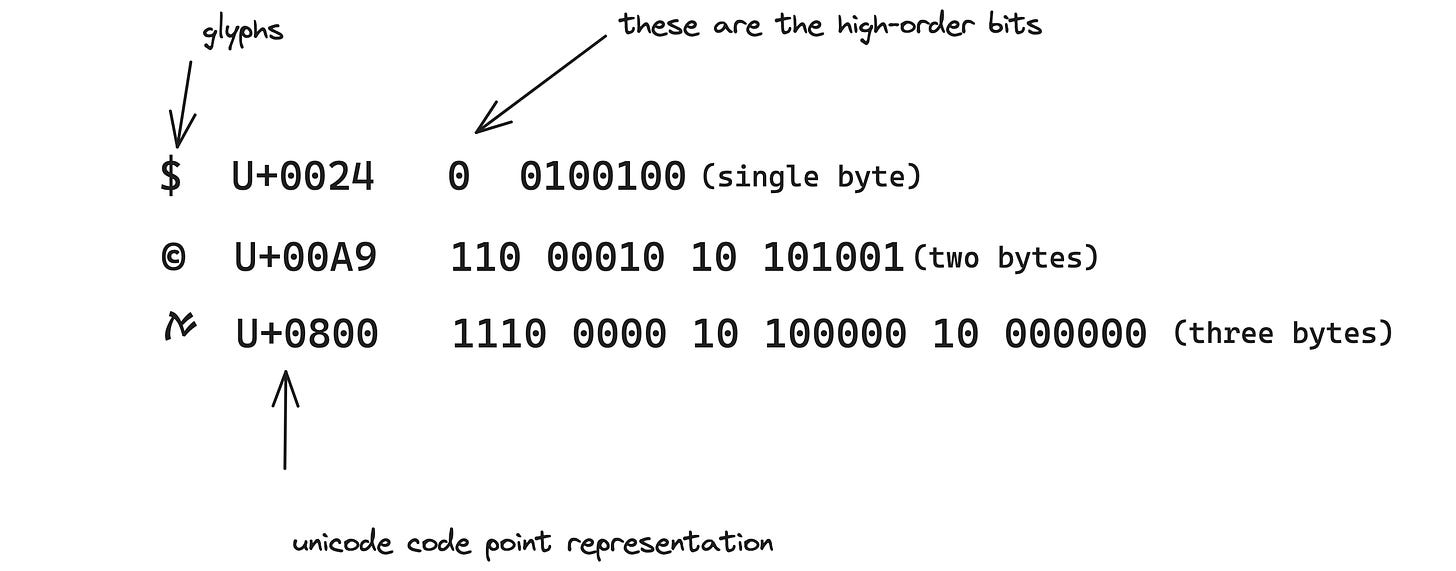

UTF-8 is a variable-length encoding of Unicode code points. It was created by Ken Thompson and Rob Pike, absolute nobodies, and uses 1 to 4 bytes per code point (the original design allowed up to 6, but it was later restricted to 4 to match Unicode’s range). The high-order bits of the first byte determine how many bytes follow. If the high-order bit is 0, the character is a single byte (same as ASCII). For 2 bytes, the first byte starts with 110; for 3 bytes, with 1110; for 4 bytes, with 11110. Every following (continuation) byte starts with 10.
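Those bit patterns are simple enough to implement by hand. Below is a hand-rolled sketch of the encoding rules, checked against Go’s own `utf8.EncodeRune`. `encodeUTF8` is a hypothetical helper, and it assumes the input is a valid code point (it doesn’t reject surrogates or values above U+10FFFF the way the real encoder does).

```go
package main

import (
	"fmt"
	"unicode/utf8"
)

// encodeUTF8 applies the UTF-8 bit layout by hand.
// Assumes r is a valid code point (no surrogates, not above U+10FFFF).
func encodeUTF8(r rune) []byte {
	switch {
	case r < 0x80: // 0xxxxxxx
		return []byte{byte(r)}
	case r < 0x800: // 110xxxxx 10xxxxxx
		return []byte{
			0xC0 | byte(r>>6),
			0x80 | byte(r&0x3F),
		}
	case r < 0x10000: // 1110xxxx 10xxxxxx 10xxxxxx
		return []byte{
			0xE0 | byte(r>>12),
			0x80 | byte((r>>6)&0x3F),
			0x80 | byte(r&0x3F),
		}
	default: // 11110xxx 10xxxxxx 10xxxxxx 10xxxxxx
		return []byte{
			0xF0 | byte(r>>18),
			0x80 | byte((r>>12)&0x3F),
			0x80 | byte((r>>6)&0x3F),
			0x80 | byte(r&0x3F),
		}
	}
}

func main() {
	for _, r := range []rune{'A', '\u00e9', '\u0800', '\U0001F600'} {
		buf := make([]byte, utf8.UTFMax)
		n := utf8.EncodeRune(buf, r) // the standard library's encoder
		mine := encodeUTF8(r)
		fmt.Printf("%U: % X (matches utf8.EncodeRune: %t)\n",
			r, mine, string(mine) == string(buf[:n]))
	}
}
```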

For example:
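Here’s a minimal Go sketch that prints the UTF-8 bytes of a few characters in binary, so the prefix bits are easy to spot. The characters are arbitrary picks, written as escapes so the source is unambiguous, and `bitPattern` is a hypothetical helper.

```go
package main

import "fmt"

// bitPattern returns the UTF-8 bytes of s written out in binary.
func bitPattern(s string) string {
	out := ""
	for i, b := range []byte(s) { // []byte(s) exposes the raw UTF-8 encoding
		if i > 0 {
			out += " "
		}
		out += fmt.Sprintf("%08b", b)
	}
	return out
}

func main() {
	// A (1 byte), é (2 bytes), € (3 bytes), 😀 (4 bytes)
	for _, s := range []string{"A", "\u00e9", "\u20ac", "\U0001F600"} {
		fmt.Printf("%s  %s\n", s, bitPattern(s))
	}
	// Prints:
	// A  01000001
	// é  11000011 10101001
	// €  11100010 10000010 10101100
	// 😀  11110000 10011111 10011000 10000000
}
```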

Notice how all the following bytes start with 10. UTF-8 is also fully backward compatible with ASCII: the first 128 code points are encoded as the same single bytes ASCII uses, so any ASCII text is already valid UTF-8.

There are also other UTF encodings, like UTF-7 and UTF-32, and there might be more that I’m not aware of. UTF-7 encodes Unicode using only 7-bit ASCII characters, so the high bit is always 0, which made it safe for old email systems that only handled 7-bit data. UTF-32 (or UCS-4) uses 4 bytes for every code point: simple and uniform, but it quadruples the space required for ASCII text. This is what you would get if you represented text as a sequence of runes (int32 values). Luckily, Go source files are always encoded in UTF-8.
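Converting a Go string to `[]rune` gives exactly that fixed-width layout, one int32 per code point, so `encoding/binary.Size` can tell us what UTF-32 would cost. This sketch ignores byte order and byte-order marks, which a real UTF-32 file would have to deal with; `utf32Size` is a hypothetical helper.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// utf32Size returns how many bytes s takes with one int32 per code point,
// the fixed-width layout UTF-32 uses (byte order and BOM ignored).
func utf32Size(s string) int {
	return binary.Size([]rune(s))
}

func main() {
	for _, s := range []string{"Hello", "Hello, 世界"} {
		fmt.Printf("%q: UTF-8 %d bytes, UTF-32 %d bytes\n", s, len(s), utf32Size(s))
	}
}
```

For pure ASCII the ratio is exactly 4x; for text with lots of 3-byte characters the gap narrows.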

Now, to answer my questions:

Well, the problem isn’t really with character encoding; it’s with glyph rendering. Fonts map Unicode code points to glyphs (the shapes that we actually see). Nerd Fonts contain extra icon glyphs that aren’t part of regular fonts, so when my regular font came across those code points it had no glyph to draw for them, even though the code points themselves were stored correctly in memory.

As for the text limit thing, many limits count bytes (or 16-bit code units) rather than code points, and since some code points, like emojis, take up more than a single byte, they can use up more than one unit of the limit. This can be shown using runes:

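```go
package main

import (
	"fmt"
	"unicode/utf8"
)

func main() {
	inputString := "Hello,ࠀ!"

	// Count Unicode code points (runes), not bytes.
	runeCount := utf8.RuneCountInString(inputString)

	// len on a string returns its length in bytes (UTF-8).
	byteCount := len(inputString)

	fmt.Printf("Number of code points: %d\n", runeCount)
	fmt.Printf("Number of bytes: %d\n", byteCount)
}
```

The output would be: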

```
Number of code points: 8
Number of bytes: 10
```

The seven ASCII characters take one byte each, and ࠀ (U+0800) takes three, which gives 10 bytes for 8 code points.

If you want to know more about character encodings, there are plenty of free resources online. Or, if you’re a books guy like me, O’Reilly’s Unicode Explained is one of the better books out there, and you can also use it as a reference in the future.

Thanks for reading.

The ࠀ character used above (U+0800) is actually Samaritan, not Runic. There are no Runic letters that look like it.